The 2023 Benchmark survey of security pros worldwide found that companies are taking action on customer privacy, but transparency is key.

Image: Anthony Brown/Adobe Stock

Cisco’s 2023 Data Privacy Benchmark Study found that companies that invest in closing the gap are benefitting: The study found that the estimated dollar value of benefits from privacy rose more than 13% in 2022 to $3.4 million from $3.0 million the year before, with significant gains across the various organization sizes.

However, 92% of respondents said their companies need to do more to protect consumer data. This finding is about the same as last year, when 90% of respondents expressed that opinion.

Cisco: Investing in protecting consumers’ privacy pays dividends

Cisco’s sixth annual 2023 benchmark is a double-blind study based on a survey of over 4,700 security professionals in 26 countries. This survey found that, economic headwinds notwithstanding, organizations are investing in privacy, with spending up significantly from $1.2 million just three years ago to $2.7 million this year, on average, which is a 125% increase.
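
The 125% figure follows from a simple percent-change calculation on the spending averages quoted above. The short Python sketch below is illustrative only and is not taken from Cisco's report; the `percent_change` helper is a hypothetical name introduced here. It also checks the article's earlier statement that the estimated value of privacy benefits rose more than 13%, from $3.0 million to $3.4 million.

```python
def percent_change(old: float, new: float) -> float:
    """Return the percentage change from `old` to `new`."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100.0


# Average privacy spending, in millions of dollars (figures quoted in the article).
spending_change = percent_change(1.2, 2.7)

# Estimated value of privacy benefits, in millions of dollars (figures quoted in the article).
benefits_change = percent_change(3.0, 3.4)

print(f"Spending change: {spending_change:.0f}%")
print(f"Benefits change: {benefits_change:.1f}%")
```

Both results line up with the article's rounded figures: roughly 125% for spending and a little over 13% for benefits.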

A Cisco blog about its 2023 Data Privacy Benchmark Study said its estimated $3.4 million value of benefits from privacy initiatives constituted 1.8 times spending on privacy, with 36% of organizations getting returns at least twice their spending.

The study noted that 30% of respondents in the U.S. identified privacy as a job responsibility (Figure A).

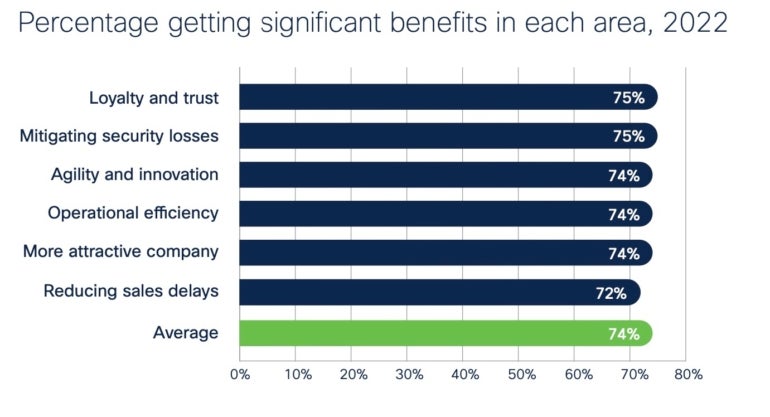

Figure A: Organizations recognizing the business benefits of privacy investments. Source: Cisco.

Nearly three-quarters of organizations Cisco surveyed said they were getting “significant” or “very significant” benefits from privacy investments. These included building trust with customers, reducing sales delays and mitigating losses from data breaches.

Some 94% of all respondents indicated they believe the benefits of privacy outweigh the costs overall.

Almost all companies reporting privacy metrics to leadership

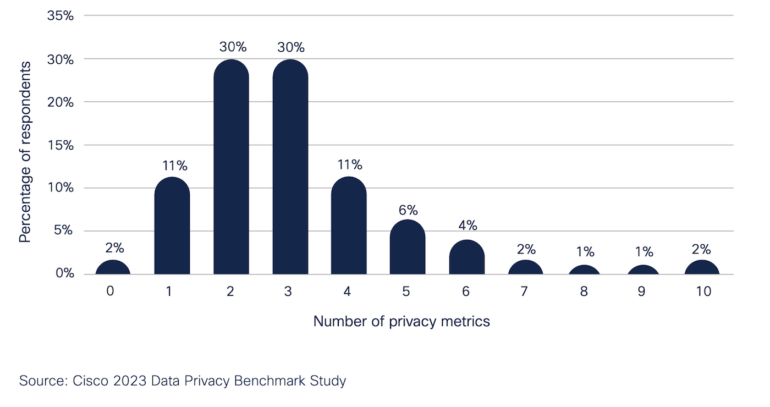

Almost every organization (98%) said they are reporting one or more privacy-related metrics to the board of directors, per Cisco, with the average number of privacy metrics at 3.1, up from the 2.6 metrics reported in last year’s benchmark survey (Figure B).

Figure B: Number of privacy metrics reported to board of directors. Source: Cisco.

The most-reported privacy metrics include:

- The status of any data breaches.
- Impact assessments.
- Incident response.

Privacy legislation continues to be very well-received around the world. Seventy-nine percent of all corporate respondents said privacy laws have had a positive impact, and only 6% indicated that the laws have had a negative impact.

Companies can do more to reassure customers about privacy

Ninety-two percent of respondents to Cisco’s survey said their organizations need to do more to reassure customers about their data, and organizations’ privacy priorities differ from those expressed by consumers.

The study also found:

- 90% said global providers are better able to protect their data compared with local providers.
- 94% of organizations said their customers won’t buy from them if data is not properly protected.
- 95% said all their employees need to know how to protect data privacy.

Still, a look at Cisco’s 2022 Consumer Privacy Survey suggests a persistent disconnect between data privacy measures by companies and what consumers expect from organizations, especially regarding how organizations apply and use artificial intelligence (Figure C).

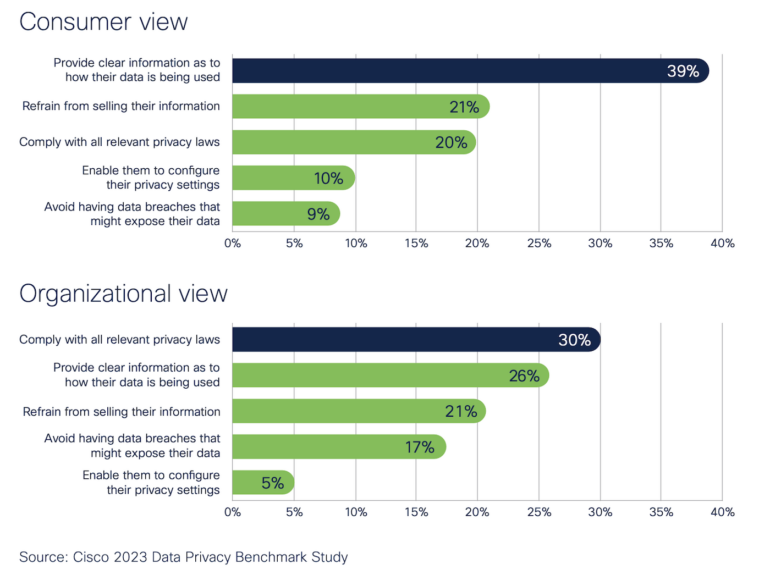

Figure C: Priorities for building consumer trust from consumer and company points of view. Source: Cisco.

Consumers are willing, sort of, to give AI the benefit of the doubt

Last year, Cisco issued a responsible AI framework and principles for responsible AI, in which the company stated it “informs customers when AI is being used to make decisions that affect them in material and consequential ways. Customers and users can then inform us of their concerns or let us know when they disagree with decisions.”

In the 2022 Consumer Privacy Survey referenced above, Cisco reported that consumers showed some willingness to share data for AI models but were dubious about how that data will be used:

- 43% of consumers said they thought AI could be useful.
- 54% were willing to share anonymized personal data to improve AI products.

However:

- 60% expressed concern about the business use of AI.
- 65% said they had already lost trust in organizations over their AI practices.

Consumers also said the top approach for making them more comfortable would be to provide opportunities for them to opt out of AI-based solutions.

Only 21% of organizations participating in the 2023 privacy benchmark survey said they would put in place ways for consumers to opt out of AI-based solutions.

How organizations are mediating the AI relationship

Cisco’s 2023 privacy study suggests that organizations are taking steps to make consumers more comfortable with AI:

- 63% of organizations said they are ensuring a human is involved in the process.
- 60% said they are explaining how the AI application works.
- 55% are adopting AI ethics principles.
- 53% said they are applying an AI ethics management program to identify and reduce unintended bias.
- 47% said they are auditing for bias.

Cisco recommends baking in transparency, privacy and AI ethics

With the use of AI-driven metrics and business intelligence increasing exponentially, Cisco offers recommendations, based on the study’s findings, for improving organizations’ trustworthiness and maximizing the benefits of privacy investments:

- Invest in privacy throughout the organization, especially among security and IT professionals and those involved directly with personal data processing and protection.
- Bake transparency into customer communications around how customers’ personal data is being used. Pointedly, compliance should be considered table stakes and transparency a business imperative because it is the key to trust, per Cisco.
- Ensure that AI design moves in lockstep with AI ethics principles, and that there are preferred management options to reassure customers, deliver greater transparency around automated decisions and ensure that a human is involved in the process when the decision is consequential to a person.
- Consider the costs and consequences of data localization, and recognize that local providers may be more expensive than global providers operating at scale and may degrade the functionality, privacy and security of data.
