Indirect Prompt Injection Is Now a Real-World AI Security Threat

Last week, researchers at Google and Forcepoint reported that indirect prompt injection — a category of attack the security community has spent two years calling theoretical — is now being executed against production AI systems in the wild.

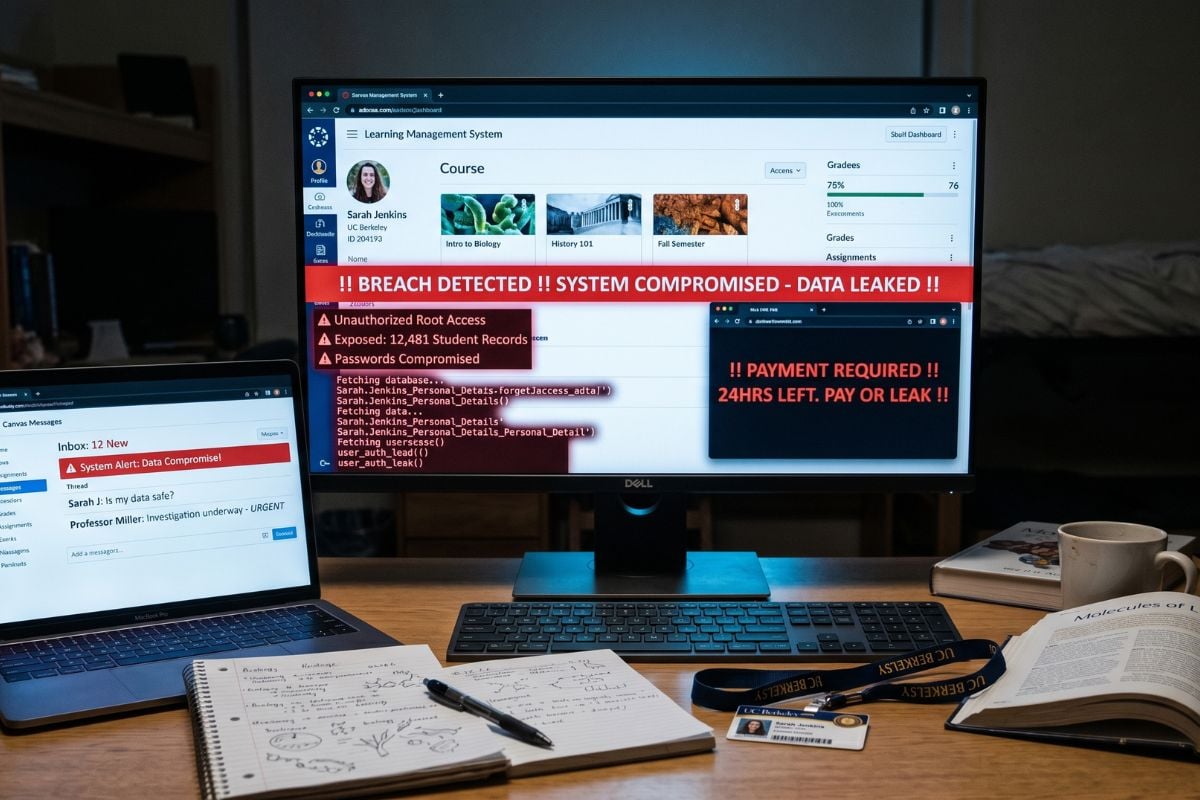

Attackers are embedding hidden instructions in web pages, documents, and emails. AI agents that browse, summarize, or process that content read the instructions and act on them. The result: data exfiltration, credential theft, and outbound requests to attacker-controlled servers, all initiated by the AI itself.

There is no phishing link to click. No malicious binary to detonate. No anomalous login to alert on. The agent is doing what it was designed to do — read content and take action — and the content is doing what the attacker intended. From the perspective of every traditional security tool, nothing is wrong.

This is the moment the AI security debate stops being academic.

This is a class of problem, not a single vulnerability

The disclosure does not exist in isolation.

Earlier this month, Noma Security disclosed GrafanaGhost — a zero-click flaw that turned Grafana’s AI assistant into a silent data exfiltration channel. Researchers embedded instructions in URL parameters that were captured in Grafana’s logs. The AI processed the logs, followed the instructions, and shipped financial metrics, infrastructure telemetry, and customer records to an attacker-controlled server by embedding them in image-render requests. A single keyword bypassed the model’s safety filters.

GrafanaGhost is patched. The class of attack is not.

The same pattern appears in ForcedLeak (Salesforce Agentforce), GeminiJack (Google Gemini), and DockerDash. Each disclosure follows the same script: AI feature added to an existing platform, untrusted content reaches the model, model takes action on attacker instructions, security tools see nothing because the AI is acting through its own legitimate channels.

The defender’s mental model — that data exfiltration requires a malicious endpoint, an exfiltration tool, or a suspicious network destination — does not apply when the AI is the exfiltration tool and its destinations appear to be routine API calls.

Academic researchers have been sounding this warning since 2023. Wei, Haghtalab, and Steinhardt’s NeurIPS 2023 paper, “Jailbroken: How Does LLM Safety Training Fail?”, showed that when an attacker could choose among the tested jailbreaks, at least one succeeded against GPT-4 and Claude on approximately 100% of the harmful prompts evaluated.

The CMU and Center for AI Safety team’s “Universal and Transferable Adversarial Attacks on Aligned Language Models” demonstrated 88% attack success on Vicuna-7B and 87.9% on GPT-3.5, with attacks transferring reliably across model architectures. The structural conclusion in the literature has been consistent: scaling alone cannot resolve these failures. Defensive training cannot win.

What changed last week is that the gap between the lab and the production environment closed.

Why model-level guardrails are configuration, not security

Most enterprises governing AI agents today rely on three things: system prompts that instruct the model on how to behave, safety filters that try to block dangerous outputs, and human-in-the-loop review for high-risk actions. None of these are security controls in any meaningful sense. They are configuration settings.

System prompts can be overridden. The InjecAgent benchmark, published in Findings of ACL 2024, found that ReAct-prompted GPT-4 was vulnerable to indirect prompt injection at a baseline rate of 24%, and that enhanced attacks nearly doubled that rate to 47%.

The AgentDojo benchmark, used by both the US and UK AI Safety Institutes, found that defenses that reduce attack success rates also significantly degrade model utility. The security-utility tradeoff is fundamental: defenses that work make the agents useless, and defenses that preserve utility leave the attack surface open.

In practice, human-in-the-loop is not a backstop either. Kiteworks’ 2026 Data Security, Compliance & Risk Forecast surveyed 225 organizations and found that 41% to 44% have not implemented basic governance controls such as human-in-the-loop oversight. Containment is worse: 55% to 63% lack purpose binding, kill switches, or network isolation for their AI agents. Organizations have invested in watching agents. They have not invested in stopping them.

And here is the part that should end the model-level-guardrail conversation entirely: a regulator will not accept “the model was instructed not to” as evidence of access control. Auditors do not certify the configuration. They certify enforcement. The first time a HIPAA, CMMC, PCI, or SOX auditor asks for proof that an AI agent was prevented from accessing a particular dataset, the answer cannot be a system prompt. It must be a logged enforcement decision.

Move the enforcement layer to the data

The architectural shift required is straightforward in principle and significant in practice: stop trying to govern AI behavior at the model layer and start governing AI access at the data layer.

Every AI request — whether from an interactive assistant, a RAG pipeline, or an autonomous agent — must be authenticated, evaluated against an attribute-based access policy in real time, and logged with full attribution before any data is returned. The enforcement decision happens between the agent and the data, not inside the model.
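To make the request flow concrete, here is a minimal sketch of a data-layer enforcement point, assuming a simple in-process gateway. Every name in it (`AGENT_KEYS`, `DATASETS`, `POLICY`, `Request`, `handle`) is hypothetical, and the HMAC request signing stands in for whatever cryptographic authentication a real platform uses. What it illustrates is the ordering the paragraph describes: authenticate, evaluate an attribute-based rule on this specific operation, write the decision to the log, and only then return data.

```python
import hashlib
import hmac
import json
import time
from dataclasses import dataclass

# Hypothetical key store. The signing key lives in the tool runtime or gateway,
# never in the prompt or model context, so injected text cannot read or reuse it.
AGENT_KEYS = {"agent-summarizer": b"example-key-held-outside-the-model"}

# Hypothetical data store with a classification attribute on each dataset.
DATASETS = {
    "sales-q3": {"classification": "internal", "rows": ["q3 revenue summary"]},
    "patient-records": {"classification": "phi", "rows": ["redacted"]},
}

# Hypothetical ABAC policy: allowed (agent, classification, purpose) combinations.
# Anything not listed is denied by default.
POLICY = {("agent-summarizer", "internal", "quarterly-report")}

audit_log: list = []


@dataclass
class Request:
    agent_id: str
    on_behalf_of: str
    dataset: str
    purpose: str
    signature: str = ""  # hex HMAC-SHA256 over the canonical request body

    def body(self) -> bytes:
        # Canonical JSON so the signer and the verifier hash identical bytes.
        return json.dumps(
            {"agent_id": self.agent_id, "on_behalf_of": self.on_behalf_of,
             "dataset": self.dataset, "purpose": self.purpose},
            sort_keys=True,
        ).encode()


def sign(key: bytes, req: Request) -> str:
    return hmac.new(key, req.body(), hashlib.sha256).hexdigest()


def handle(req: Request) -> list:
    """Authenticate, authorize, and log a single data request before returning anything."""
    key = AGENT_KEYS.get(req.agent_id)
    authenticated = key is not None and hmac.compare_digest(sign(key, req), req.signature)
    ds = DATASETS.get(req.dataset)
    classification = ds["classification"] if ds else None
    # Policy is evaluated on every call, not once at connection time.
    allowed = authenticated and (req.agent_id, classification, req.purpose) in POLICY
    # The decision is logged with full attribution before any data is returned.
    audit_log.append({
        "ts": time.time(), "agent": req.agent_id, "user": req.on_behalf_of,
        "dataset": req.dataset, "purpose": req.purpose,
        "authenticated": authenticated, "decision": "allow" if allowed else "deny",
    })
    if not allowed:
        raise PermissionError(f"denied: {req.agent_id} -> {req.dataset}")
    return ds["rows"]
```

In this sketch, a correctly signed request for `sales-q3` is allowed. A correctly signed request for `patient-records` is still denied and logged, because no rule permits `phi` data for that purpose, no matter what the prompt told the agent. A request with a forged signature is denied because authentication fails. The default-deny set lookup is deliberately simple; a production policy engine would evaluate richer attributes, but the placement of the check between the agent and the data is the point.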

This pattern — what the industry is starting to call data-layer governance — has four properties that model-level guardrails cannot provide. Authentication is cryptographic, not session-based, with credentials never exposed to the model context. Authorization evaluates the agent’s identity, the data’s classification, and the request context against policy on every operation, not at connection time. Encryption uses validated cryptographic modules that meet federal and industry-specific requirements. The audit trail is tamper-evident and streams to SIEM in real time, producing the evidence regulators demand.
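One common way to make an audit trail tamper-evident is a hash chain, where each entry commits to the hash of the entry before it. The sketch below is a minimal illustration of that technique, not a description of any specific product; the `AuditLog` class and the all-zero genesis value are assumptions made for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical starting value for the first entry's "prev" field


class AuditLog:
    """Append-only log where each entry commits to the hash of the previous entry."""

    def __init__(self):
        self.entries: list = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        # Hash the previous hash together with a canonical encoding of the record.
        payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(prev, record)
        self.entries.append({"prev": prev, "record": record, "hash": entry_hash})
        return entry_hash  # the current head hash

    def verify(self) -> bool:
        # Recompute the chain from the start; any edited record or broken link fails.
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True
```

A chain on its own only detects in-place edits. Someone who can rewrite the entire log can also recompute every hash, and deleting trailing entries leaves a valid shorter chain. That is why streaming entries, or at least the latest head hash, to an external system such as a SIEM matters: the copy held elsewhere is what anchors the chain.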

The critical property is independence. Data-layer enforcement keeps working even when the model is compromised, the prompt is manipulated, the agent framework is updated, or a new jailbreak technique is published on arXiv. The agent inherits the user’s permissions and cannot exceed them.

A compromised agent cannot exfiltrate data that it was never authorized to read in the first place. And the audit log produces the answer to the question regulators are starting to ask in every AI-related inquiry: prove what your agent did, who authorized it, and that the action conformed to policy.
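The rule that an agent inherits the user’s permissions and cannot exceed them can be expressed as a set intersection. In this minimal sketch, the tables `USER_GRANTS` and `AGENT_SCOPE` are hypothetical. `AGENT_SCOPE` is an assumed stand-in for purpose binding, that is, the datasets an agent may ever touch. The effective permission set is whatever both the delegating user and the agent’s own scope allow.

```python
# Hypothetical grant tables. In practice these would come from the identity
# provider and the data platform's entitlement system.
USER_GRANTS = {
    "alice": {"sales-q3", "marketing-plan"},
    "bob": {"sales-q3", "patient-records"},
}

# Hypothetical purpose binding: the datasets this agent may ever touch,
# regardless of which user it is acting for.
AGENT_SCOPE = {
    "agent-summarizer": {"sales-q3", "marketing-plan", "patient-records"},
}


def effective_permissions(agent_id: str, user_id: str) -> set:
    """An agent acting for a user gets at most the intersection of both grants."""
    return USER_GRANTS.get(user_id, set()) & AGENT_SCOPE.get(agent_id, set())


def can_read(agent_id: str, user_id: str, dataset: str) -> bool:
    # Unknown users or agents resolve to an empty set, so the default is deny.
    return dataset in effective_permissions(agent_id, user_id)
```

Under these assumptions, if an injected instruction tells the summarizer to pull `patient-records` while it is acting for `alice`, the request fails. This happens no matter how convincing the instruction is, because neither the prompt nor the model can widen an intersection computed outside the model.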

The question has already changed

The first round of enterprise AI deployment was governed by a question: how do we stop our employees from putting sensitive data into ChatGPT? That question was answered, imperfectly, with policy and DLP. The second round is governed by a different question: how do we stop our AI agents from being weaponized against our own data?

The Google and Forcepoint disclosure says the second question is no longer hypothetical. The agents are already being weaponized. The only remaining decision is whether the controls protecting your regulated data depend on the model behaving as instructed — or on enforcement that holds when it does not.

For a deeper look at how regulators are responding, see CISA’s AI security guidance on defending against emerging threats like these.