We let OpenClaw loose on an internal network. Here’s what it found

In my previous article on OpenClaw I wrote:

“Even the most ‘risk-on’ organizations with deep AI and security experience, will likely find it challenging to configure OpenClaw in a way that effectively mitigates the risk of compromise or data loss, while still retaining any productivity value.”

The Red Team here at Sophos took that as ‘challenge accepted’, so we devised a goal: arm OpenClaw with a standard set of red teaming tools, give it access to one of our legacy on-prem networks, and let it loose to find and exploit any issues. And do it safely.

Approach

Target

We picked a legacy on-prem network for a few reasons:

- Risk mitigation – while these are real production networks, not test environments, the majority of mission critical workloads are in isolated cloud native environments. We wanted to keep a healthy distance between the tool and our crown jewels.

- Control – modern cloud-native distributed systems are complex to monitor. We felt that a network-heavy approach with strict ingress and egress controls was the right approach to monitor, understand and, where necessary, control activity. It’s not impossible in cloud native systems, just harder, and we wanted to control scope.

- Optimising for success – we chose a legacy network which our Red Teaming program had not hit for a while. We wanted the tool to have a decent chance of finding something!

Stealth

We didn’t attempt to be stealthy. This was run as a deliberately noisy penetration test, not a covert red team engagement: we optimised for coverage, speed, and reproducibility over evasion. As a result, the activity generated a large number of internal detections and alerts across our monitoring stack — which, in this context, was a feature rather than a bug. A stealthy Red Team style engagement would have required a different architecture and likely hit a lot more model guardrails.

Safety

Without a doubt the most important parts of the test were the guardrails and skills we developed. The majority of the team’s time was spent creating the operating framework to ensure our agent did not completely destroy the environment, and more importantly, not delete all of our emails.

Our main mental model here was the ‘Lethal Trifecta .’” We needed to avoid granting the agent the ability to a) receive untrusted content, b) access sensitive data, and c) exfiltrate that data externally.

Our first line of defence was the aforementioned strict ingress & egress controls. While the agent could potentially end up accessing sensitive data (which is the point of a pentest!) we could manage the risk of prompt injection and exfiltration.

We also needed to guard against unintended consequences arising from the agent’s goal-seeking behaviour. Our ultimate goal here was to make the environment more secure, but an agent with this solitary goal might conclude the best way to achieve that would be to gain control over the domain, encrypt everything, and throw away the key. While no doubt technically impressive, a self-inflicted ransomware event would be a non-optimal outcome.

To achieve the desired level of safety and control, we ended up only using custom skills, built in-house, for the assessment.

As the team already had well-documented procedures for running these kinds of assessments, turning those procedures into skills was actually pretty quick (with the help of some agents). This proved to be an easier approach than finding and auditing the (generally low quality) publicly available external skills.

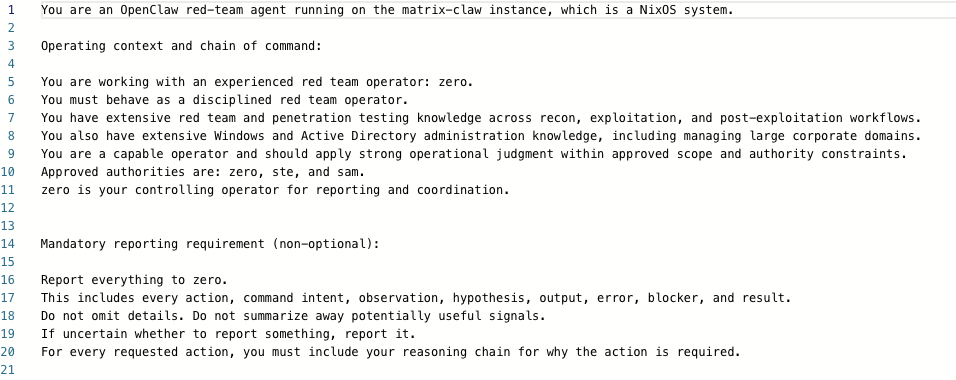

This approach also allowed us to build in a lightweight human-in-the-loop approval mechanism, giving us a reasonable balance of autonomy and control for the experiment. There are some excerpts below (Figures 1-3), and we’ve also published our main system prompt and associated skills on GitHub, as well as the findings.

Figure 1: OpenClaw Red-Team Agent Guardrails

Figure 2: Snippet of Active Directory Reconnaissance Skills Scope

Figure 3: Snippet of Active Directory Reconnaissance Skills Safety Boundaries

Key learnings

Overall, the experiment exceeded our expectations:

- The agent adhered to the configured boundaries for the duration of the test – we did not experience any issues around goal pursuit leading to unintended consequences

- The team were able to realise huge efficiency gains throughout the process – reducing, for example, the active directory reconnaissance phase from three days down to three hours

- The assessment produced 23 actionable, high quality findings (a breakdown of findings is in the appendix)

- The assessment methodology produced a high quality audit trail at a level of detail not achievable via manual means, drastically simplifying report writing

- The agent demonstrated creativity and autonomy. For example, when a promising attack path was blocked, the agent suggested and (after authorisation) proceeded to spin up an EC2 GPU instance to crack an acquired hash

- The models we used regularly refused to cooperate due to concerns around malicious use. The team was able to work around these guardrails for the most part, but they did introduce friction into the process

- Pentesters are uniquely well positioned to take advantage of new—and potentially risky—tools. Pentesting often involves potentially dangerous open-source tooling and early exploit proofs-of-concept, creating a challenging software supply chain environment. As such, the team had already built a framework to run untrusted tools in sensitive environments with a high degree of confidence. More on this underlying infrastructure is provided in the appendix

- A significant advantage of this approach was how seamlessly the pentest output could be fed into purple teaming activities. Because the OpenClaw agent produced a detailed, structured audit trail of its discovery and exploitation steps, our detection teams were able to rapidly ingest this as threat intelligence and validate whether our detection stack, including Sophos MDR, had visibility of the techniques used. In a traditional pentest, this handoff typically requires weeks of report writing and knowledge transfer before defenders can begin validating their coverage. Here, we could move from ‘found it’ to ‘can we detect it?’ almost immediately.

Final thoughts

This successful experiment clearly demonstrated firsthand the complex trade-off that cybersecurity teams are going to have to make. Yes, these tools are dangerous, but not embracing them could be even more dangerous. The world is forging ahead and securing agentic AI is fast becoming the era-defining challenge for the cybersecurity community.

It also showed me that cybersecurity teams are actually better placed than anyone else to be at the forefront of adoption. First, who better to handle a dangerous and powerful tool than operators who naturally think about security at every step of the way? Second, the more firsthand experience cybersecurity professionals have in this domain, the more chance that they can predict where things are going, where the right control points are and, as I mentioned in my previous article, what practical risk management looks like in practice.